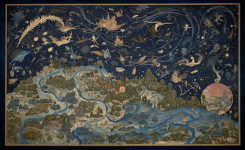

post pictures your computer has generated ( algorithmic art )

Clinamenic

Binary & Tweed

thought better to post in this thread rather than the other one, but these posts from @shiels set me off thinking

The frustrating thing about large language model AI at the minute is that it isn't weird enough, while on the visual side it's too weird

my last point isn't related to my first there, I just wrote it as I thought it - but why is that? the language stuff is generalisation transposed into a linear structure and narrative, while an image is worth 'a thousand words' and this is obv more difficult to represent

my understanding on the images, and i could be wrong, perhaps @william_kent @wektor @Clinamenic or anyone else can weigh in...

...is that the images are being generated out of captions/descriptions of images (plus other metadata like when the image was taken/by who etc) rather than out of images. it's all driven by language, but there are a few add-ons to make the images better, e.g. inpainting, which allows you to isolate the bit you want to transform (and which I was asking a few people about on here a while ago).

I'm more interested in image to video at the moment, getting that sense of transformation, but guiding the AI to do it is difficult.

and it's more noticeable now how prompts are getting more essay-like and that negative prompting has been added.

eg from https://stable-diffusion-art.com/prompt-guide

positive prompt:

Emma Watson as a powerful mysterious sorceress, casting lightning magic, detailed clothing, digital painting, hyperrealistic, fantasy, Surrealist, full body, by Stanley Artgerm Lau and Alphonse Mucha, artstation, highly detailed, sharp focus, sci-fi, stunningly beautiful, dystopian, iridescent gold, cinematic lighting, dark

negative prompt:

ugly, tiling, poorly drawn hands, poorly drawn feet, poorly drawn face, out of frame, extra limbs, disfigured, deformed, body out of frame, bad anatomy, watermark, signature, cut off, low contrast, underexposed, overexposed, bad art, beginner, amateur, distorted face, blurry, draft, grainy

so that is quite a lot of text to define the image.
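
For what it's worth, here is my rough understanding of what the negative prompt does under the hood (a toy sketch, not the actual Stable Diffusion code): at each denoising step the model makes one noise prediction for the positive prompt and one for the negative prompt, and the sampler pushes away from the second and towards the first. The function name `guided_noise` and the numbers are made up for illustration; 7.5 is just a commonly used default guidance scale.

```python
def guided_noise(cond, neg, scale):
    """Combine two noise predictions the way classifier-free guidance does.

    cond  -- prediction conditioned on the positive prompt
    neg   -- prediction conditioned on the negative prompt (an empty
             prompt plays this role when no negative prompt is given)
    scale -- guidance scale; larger values push harder away from `neg`
    """
    return [n + scale * (c - n) for c, n in zip(cond, neg)]


# Toy 3-value "noise predictions" (made-up numbers, not real model output).
cond = [0.5, -0.25, 1.0]
neg = [0.25, 0.0, 1.0]

print(guided_noise(cond, neg, 1.0))  # scale 1 gives back the positive-prompt prediction
print(guided_noise(cond, neg, 7.5))  # higher scale extrapolates further away from `neg`
```

So the long negative prompt isn't describing the image at all, it's describing the direction to steer away from.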

I've got Stable Diffusion with Deforum running locally now, and it's a big difference from 18 months ago when I was using VQGAN+CLIP.

Back then I was on a Google Colab Pro+ account and it was taking 20 mins or so to output this combo:

Initial image:

and this prompt:

"human leg"

into:

human leg (VQGAN+CLIP)

whereas with Stable Diffusion, the same initial image and prompt is now giving

human leg (stable diffusion)

and that is being run locally, no internet connection, outputting in less than 5 mins.

Kinda amazing. The spec on this laptop I'm using is high (8GB VRAM / 16GB RAM) but I expect it will be possible to run stuff locally on a phone soon.
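
On how the initial image gets used: as far as I understand it, image-to-image setups usually add noise to the starting image and then only run part of the denoising schedule, controlled by a "strength" setting. A minimal sketch of that bookkeeping, assuming the common convention that strength is a number between 0 and 1 (the function name `img2img_steps` is mine, and this is not the code of any particular tool):

```python
def img2img_steps(num_inference_steps, strength):
    """Return how many denoising steps actually run for a given strength.

    strength is in [0, 1]: 0 leaves the initial image untouched, 1 runs
    the full schedule, so the initial image is essentially ignored.
    """
    if not 0.0 <= strength <= 1.0:
        raise ValueError("strength must be between 0 and 1")
    return min(int(num_inference_steps * strength), num_inference_steps)


# With a 40-step schedule, see how many steps each strength would run.
for s in (0.0, 0.25, 0.5, 1.0):
    print(s, img2img_steps(40, s))
```

Lower strength means fewer steps and a result that stays closer to the initial image, which is also part of why animating from a starting frame can be quicker than generating from scratch.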

Clinamenic

Binary & Tweed

Do you know anything about any similar projects but with EEG data instead of fMRI? @wektor @william_kent ?

Yeah, I've been following that research. It's gonna come in really handy for the secret police.

There's some controversy around the research, but EEG to imagined speech looks like a thing https://blog.frontiersin.org/2021/0...nachakel-ganesan-indian-institute-of-science/ which would also be very useful in a torture chamber.

wektor

Well-known member

Do you know anything about any similar projects but with EEG data instead of fMRI? @wektor @william_kent ?

Provided there's a dataset that's big enough, training a sequence2sequence model that maps EEG to language must surely be within reach, but that assumes one crucial thing: that there is a correlation between the two.

You can map between English and German or English and Elvish or whatever, as there must be some correlation on some level. If you tried to map English to a photo of your living room, that's possible too I suppose.

No clue about the accuracy of the EEG readings we can get at this point, see:

It makes me think that if you get your brain plugged into a transformer model by the mind police it would result in a scenario that's less than optimal.

You reckon there's enough accuracy in the spike trains we can read to tell whether an accused person is actually thinking about committing crime? What if they're scared of crime and are thinking about it? What if they're scared that someone will presume they are thinking about committing crime?

I don't think we are going down the route of AI models taking over big management roles as they would often get very very confused and make choices that are extremely disastrous (if not hilarious).

william_kent

Well-known member

Do you know anything about any similar projects but with EEG data instead of fMRI? @wektor @william_kent ?

you're aware of the French "thought to movement" research?

primitive, but I can see that when fully developed the technology as described in this layperson's piece in the Financial Times could have other uses ( I'm thinking thought control of my robot army, etc... )

Using algorithms based on adaptive AI methods, “movement intentions are decoded in real time from brain recordings”, said Guillaume Charvet, head of brain-computer interface research at French public research body CEA.

These signals are then transmitted wirelessly to a neurostimulator connected to an electrode array over the part of the spinal cord that controls leg movement below the injury site

I don't think we are going down the route of AI models taking over big management roles as they would often get very very confused and make choices that are extremely disastrous (if not hilarious).

This is literally the defining characteristic of management roles.

Slothrop

Tight but Polite

This is literally the defining characteristic of management roles.

Yeah, thinking about it, quite a lot of management seems to basically consist of generating statements that are well put together and read nicely and are full of all the expected words but whose relationship to reality is kind of incidental, which is a very LLM-friendly task.

Clinamenic

Binary & Tweed

Edit: hmm, I thought this would let you upload your own images, but I guess not.

Clinamenic

Binary & Tweed

@william_kent @wektor do you know of any functional VQGAN+CLIP interfaces (or anything similar, which can animate initial images) I can use? Been through half a dozen Google Colab notebooks now, and they all keep throwing new errors.